Short Description

Automatically segment the boundary of a nucleus or cell starting from an approximate ROI. It supports 2D and 3D processing and tracking of slowly moving cells. Ideal for studying cell morphodynamics.

This book chapter provides a step-by-step tutorial for the Active Contours plugin with video tutorials (see tutorials 3 to 5). Images used in the tutorial are available on this Zenodo repository.

If you use this plugin in your work, please cite the following reference: “Dufour et al., IEEE Transactions on Image Processing, 2011”. This reference describes the 3D version of the algorithm, but the 2D version works exactly the same.

Documentation

The “Active Contours” plugin is a segmentation and tracking tool that is able to extract the outline of objects in 2D or 3D images and track these outlines over time in a 2D or 3D time-lapse sequence. In a nutshell, an initial contour is drawn (or generated) around an object of interest and then snapped onto the object’s border automatically. In a tracking scenario, the final object border is taken as the initial position to segment the following frame, and so forth. Note that the initial contour needs to be in 3D to do 3D processing. Note also that tracking use cases require a good time resolution, such that an overlap exists between successive positions of the tracked object.

This documentation is divided into three main parts. It begins with a short step-by-step guide and an example to get started quickly. It continues with a description of the plugin parameters, including the choice of implementation and the available parameters. The last part explains the method, its principle and its numerous implementations, and is worth reading to get an in-depth understanding of the plugin.

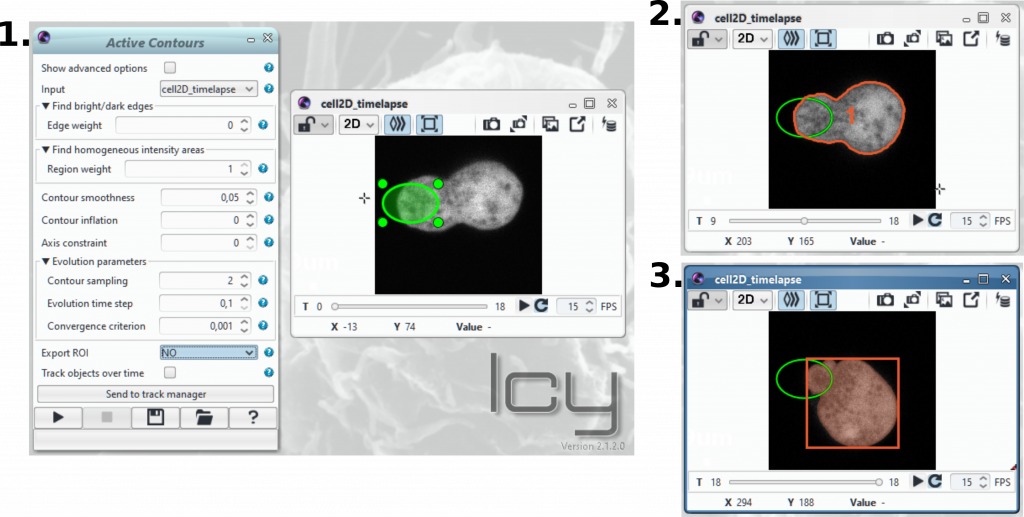

Step-by-step guide for segmentation and tracking with the Active Contours plugin

1) Open an image or video sequence

2) Draw one or more regions of interest (ROIs) around or across the object(s) of interest. Draw a 3D ROI for 3D processing. You can also generate one or more ROIs automatically using other plugins such as the “Thresholder” or the “HK-Means”. This is the preferred way of initialising the method in 3D until a good 3D ROI editor is implemented in Icy, and it is a good step towards full automation of the workflow.

3) Adjust the various parameters of the method (see the example and explanations below). A small tooltip message will appear under the mouse as you hover over each parameter, indicating how to adjust it for each scenario.

4) Select the desired output (no output, export of ROIs on input image, copy of original sequence with detected objects as ROI or labeled sequence)

5) For tracking: first leave the tracking option unchecked; adjust the parameters on the first image; only then check the “tracking” option to process the entire sequence.

6) To display the advanced parameters, check “Show advanced options” at the top of the plugin window

Example of segmentation and tracking of an amoeba

To run this example, download the following video sequence of a moving cell (cell_bleach.tif) and open it in Icy.

Open the active contours plugin, follow the instructions above and use the same parameters as in the video below. You can load the cell_bleach.params file to use exactly the same parameters. You should obtain the exact same results as in the video.

Description of active contours parameters

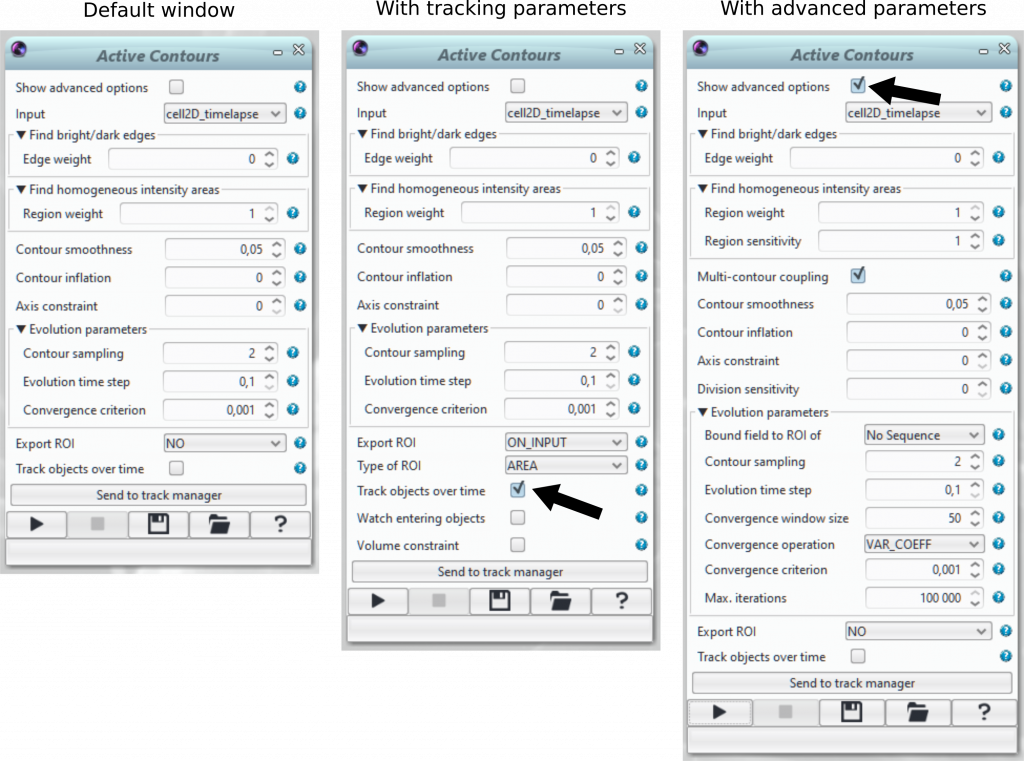

Some parameters are visible only when ticking “Track objects over time” or “Show advanced options”.

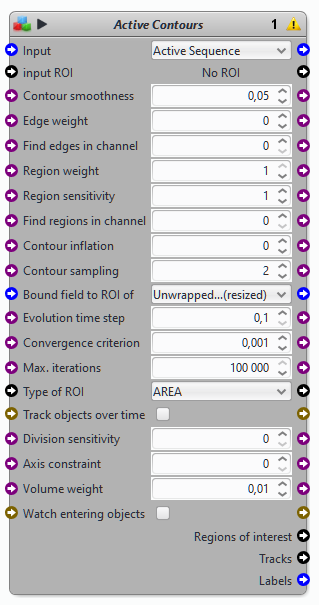

Note also that the Active Contours plugin has a block counterpart.

This plugin implements Multiple Coupled Active Contours in 2D and 3D, according to an energy-minimizing framework with a discrete explicit representation of the contours (polygons in 2D, and triangular meshes in 3D, both with self-parametrisation and topology control). In a nutshell: this plugin works in 2D & 3D, tracks objects over time, handles multiple contours simultaneously, detects divisions, and is pretty damn fast! For more details, read the Method section below.

The contour evolution exploits multiple cues to achieve optimal segmentation (both based on the image and on semantic information). Just like you would improve a cooking recipe by adjusting the nature and quantity of ingredients, the best segmentation is achieved by carefully adjusting the different parameters.

Edge- and region-based information

The most important parameters are the Edge weight and Region weight.

Many markers used in microscopy are specific to membrane structures, i.e. “edges”. Edges can also be present without specific markers, in phase contrast microscopy images for instance. The contour can exploit this edge information to “look” for bright (or dark) edges of a structure, and stop when these structures are reached. The caveat here is that the contour must already be close to its target in order to “snap” to the border correctly (otherwise the contour won’t “see” it).

Other types of fluorescent markers highlight non-membrane structures such as the cytoplasm, the nucleus, or other organelles, and delineate regions. Region-based information is a very powerful asset for segmentation and tracking. The Active Contours plugin exploits the Mumford-Shah functional to estimate the optimal frontier that segments the regions of interest by maximising the difference between their average intensity and that of the background. Here this also works with multiple regions that do not share the same average intensity (convenient if the staining is not constant from cell to cell). The advantage here is that the contour does not need to be very close to the target to segment it (although being close makes the whole process faster!), and there is no need to have “sharp” edges to find the boundary.

- Edge weight: a value between -1 and 1. Set the edge weight to 0 to ignore edges in the calculation, or to a non-zero value to take them into account. The closer this value is to -1 or 1, the more importance you give to edges. Use a negative value for dark edges (low intensities) and a positive value for bright edges.

- Region weight: a value between 0 and 1. Set it to 0 to ignore region information in the evolution of the contour. The closer the value is to 1, the more importance you give to region information.

- Region sensitivity (advanced options): a value between 0 and 3 with default set to 1. Increase sensitivity if the region of interest is weakly stained (for instance, weak DAPI signal) and there is little contrast between the structure of interest and the background.
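The region-based idea can be illustrated with a minimal sketch. It assumes a two-region, piecewise-constant model on a 1D intensity profile, which is a strong simplification and not the plugin's actual implementation; the function names and the sample profile are made up for the illustration. The boundary is placed where each side is best described by its own average intensity, which is equivalent to maximising the difference between the two averages.

```python
def region_energy(profile, boundary):
    """Sum of squared deviations of each side from its own mean intensity."""
    energy = 0.0
    for region in (profile[:boundary], profile[boundary:]):
        mean = sum(region) / len(region)
        energy += sum((value - mean) ** 2 for value in region)
    return energy


def best_boundary(profile):
    """Test every split that leaves both regions non-empty and keep the best."""
    return min(range(1, len(profile)), key=lambda b: region_energy(profile, b))


# Hypothetical profile: a blurry transition with no sharp edge,
# but two clearly different average intensity levels.
profile = [10, 11, 9, 10, 12, 14, 17, 19, 20, 21, 19, 20]
boundary = best_boundary(profile)
print("boundary index:", boundary)
```

Note that the search does not depend on any starting position or on a sharp gradient, which is the property the region weight relies on.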

Contour properties

- Multi-contour coupling (advanced options): each region of interest is segmented (and tracked) by its own contour. This offers a considerable advantage when dealing with objects in contact. When ticked, the algorithm will exploit semantic information and prevent contours from overlapping or fusing when they come into contact, making it possible to separate touching objects, even during prolonged contacts over time. Caveat: extensive prolonged contacts (twisting, swirling) may not be handled properly, as the actual boundary between objects is not necessarily clear, even for the human eye!

- Contour smoothness: as the image data may be quite noisy (especially in low-light conditions), a geometrical constraint is usually imposed to maintain a certain level of smoothness in the deforming contour. You can use less smoothness on clean data, more on noisy data. Note that pushed to the extreme, this smoothness constraint will cause the contour to progressively shrink and eventually disappear, so use wisely!

- Contour inflation (a.k.a. “balloon force”): should you opt for edge information, and if your contour is too far away from the target, an arbitrary inflation force can be applied to the contour such that it will continuously shrink (if <0) or inflate (if >0) with a certain speed. This should be used minimally and with care, as it should only help the contour find real edges, and not prevent it from staying there.

- Axis constraint: default value is 0 (no axis constraint). This parameter further assists in separating objects in contact by imposing that contours preferentially deform along their major axis (if there is one). Use axis constraint with caution.

- Division sensitivity (advanced options): default value is 0. Increasing this value will help detect object division / separation when objects are close to each other.
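The shrinking effect of an excessive smoothness constraint can be reproduced with a small sketch. It assumes that smoothing is modelled as moving each control point toward the midpoint of its two neighbours, which is a common textbook regularisation and not necessarily the one used by the plugin; the contour, weight and iteration count are arbitrary.

```python
import math


def polygon_area(points):
    """Shoelace formula for a closed polygon given as (x, y) tuples."""
    n = len(points)
    total = 0.0
    for i in range(n):
        x0, y0 = points[i]
        x1, y1 = points[(i + 1) % n]
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0


def smooth_step(points, weight):
    """Move each control point toward the midpoint of its two neighbours."""
    n = len(points)
    smoothed = []
    for i in range(n):
        px, py = points[i - 1]
        cx, cy = points[i]
        nx, ny = points[(i + 1) % n]
        mx, my = (px + nx) / 2.0, (py + ny) / 2.0
        smoothed.append((cx + weight * (mx - cx), cy + weight * (my - cy)))
    return smoothed


# Hypothetical starting contour: a regular 40-gon of radius 20 pixels.
contour = [(20 * math.cos(2 * math.pi * k / 40), 20 * math.sin(2 * math.pi * k / 40))
           for k in range(40)]
initial_area = polygon_area(contour)
for _ in range(500):
    contour = smooth_step(contour, weight=0.5)
final_area = polygon_area(contour)
print(f"area before: {initial_area:.1f}, after: {final_area:.3f}")
```

With no image term to oppose it, smoothing alone makes the contour collapse, which is why the smoothness weight has to be balanced against the edge and region weights.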

Evolution parameters

Finally, the evolution of the contour itself (i.e. the minimization of the underlying mathematical functional) also has its set of parameters which can be adjusted. These have more to do with the total execution speed of the segmentation / tracking process, here again with pros & cons:

- Bound field to ROI of (advanced options): constrains the contour to stay within the boundary of the specified ROI.

- Contour sampling: default value is 2 (each point on the contour is 2 pixels away from its neighbor). Contours used in this plugin are represented as a set of geometrical primitives (segments in 2D, triangles in 3D) connecting so-called “control points”. The average separation between these control points can be adjusted, noting that a smaller distance results in more precise (but slower) computations, while a larger distance results in faster computation, with the risk of losing structures smaller than this sampling step.

- Evolution time-step: although the contour may seem to move magically and smoothly, it is actually advancing by very small jumps in the image. The size of this jump can be adjusted to move faster to the solution (from the default value of 0.1 up to 1 if the signal is sharp enough), with the immediate caveat that moving too fast may cause instabilities in the contour (it may oscillate around the optimal solution, and sometimes it will have issues when dealing with other touching objects).

- Convergence window size (advanced options): number of iterations over which the algorithm checks whether the result of the convergence operation (for instance the variance) is below the convergence criterion. Modify with caution.

- Convergence operation (advanced options): the operation used to determine the convergence of the contour. Possible operations are:

  - NONE: no convergence computation (will use Max. iterations setting to stop contour evolution)

  - MIN: minimum contour size

  - MAX: maximum contour size

  - MEAN: mean contour size

  - SUM: sum of contour size

  - VARIANCE: variance of contour size

  - VAR_COEFF: coefficient of variance (variance / mean) of contour size (default)

- Convergence criterion: once the contours have found their target, in most cases they will (or rather, seem to) naturally stop moving. Mathematically speaking, this means that a steady-state solution to the problem has been found, but this state is only steady “in theory”. In practice, there are always minor oscillations around this solution, so convergence is detected by measuring the overall movement of each contour and stopping the contour once this movement falls below a reasonably low value. Modify with caution.

- Max. Iterations: maximum iteration number for the contour evolution. Useful if you don’t want to rely only on the convergence criterion to stop the contour evolution. Default value is 100000.
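The interplay between the convergence window size, the convergence operation, the convergence criterion and Max. Iterations can be sketched as follows. This is a minimal illustration under stated assumptions, not the plugin's code: the function names are hypothetical, the contour sizes are synthetic, and VAR_COEFF is computed as variance / mean exactly as worded in the list above.

```python
from collections import deque


def var_coeff(values):
    """Variance divided by mean, following the VAR_COEFF description above."""
    mean = sum(values) / len(values)
    if mean == 0:
        return 0.0
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    return variance / mean


def run_until_converged(sizes, window_size, criterion, max_iterations):
    """Return (iterations performed, whether the criterion was met)."""
    window = deque(maxlen=window_size)  # keeps only the most recent sizes
    done = 0
    for size in sizes:
        if done >= max_iterations:
            break
        done += 1
        window.append(size)
        if len(window) == window_size and var_coeff(window) < criterion:
            return done, True
    return done, False


# Synthetic contour sizes: one settles down, the other keeps oscillating.
settling = [100 + 50 * 0.8 ** k for k in range(200)]
oscillating = [100 + 20 * (-1) ** k for k in range(200)]

stopped_at, converged = run_until_converged(settling, 10, 0.001, 100000)
capped_at, capped_ok = run_until_converged(oscillating, 10, 0.001, 50)
print(stopped_at, converged, capped_at, capped_ok)
```

The second call shows why a maximum iteration count is useful: a contour that oscillates never satisfies the criterion and is only stopped by the cap.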

Tracking options

When ticking “Track objects over time”, two more parameters appear: Watch entering objects and Volume constraint.

- Volume constraint: in some cases (particularly in contact situations, as described above), the separation between touching objects is challenging. This plugin has the option to enforce that each tracked object preserves a “roughly” constant volume over time (fluctuations are allowed though), in order to improve tracking (this assumption is highly debatable from a biological perspective, and definitely wrong in 2D, but might solve tricky situations in 3D). This parameter is weighted between 0 and 1. A value of 0 means no control over the volume of the contours. A value of 1 means that between two time points of the sequence the contours should keep the same volume. Note: Use with caution. This parameter is highly sensitive and should only be used when contours disappear or get reduced due to contour contact. It is disabled by default.

- Watch entering objects (advanced options): enabling this option allows the detection of new objects entering the field of view when tracking is used. Internally, the plugin runs an HK-Means segmentation and retains newly detected objects that are similar in size to the current active contours. Note that you can place the initial ROI(s) on a specific time point so that contour tracking starts at this position.

- Send to track manager: once the tracking process has completed, click this button to send the tracking data to the Track Manager and perform your tracking analysis there.
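The meaning of the 0 to 1 weighting of the volume constraint can be illustrated with a small 2D sketch. How the plugin enforces the constraint internally is not described here, so the sketch makes a loud assumption: the contour is simply rescaled about its centroid so that its area is a weighted blend of its current area and the area at the previous time point. All names are hypothetical.

```python
import math


def polygon_area(points):
    """Shoelace formula for a closed polygon given as (x, y) tuples."""
    n = len(points)
    total = 0.0
    for i in range(n):
        x0, y0 = points[i]
        x1, y1 = points[(i + 1) % n]
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0


def constrain_area(points, reference_area, weight):
    """Rescale the contour about its vertex centroid (assumed mechanism).

    weight = 0 leaves the contour untouched; weight = 1 restores the
    reference area measured at the previous time point.
    """
    current = polygon_area(points)
    target = (1.0 - weight) * current + weight * reference_area
    scale = math.sqrt(target / current)
    cx = sum(x for x, _ in points) / len(points)
    cy = sum(y for _, y in points) / len(points)
    return [(cx + scale * (x - cx), cy + scale * (y - cy)) for x, y in points]


# Hypothetical situation: the contour shrank from an area of 16 to 4 after a contact.
shrunk = [(0, 0), (2, 0), (2, 2), (0, 2)]
free = polygon_area(constrain_area(shrunk, reference_area=16.0, weight=0.0))
half = polygon_area(constrain_area(shrunk, reference_area=16.0, weight=0.5))
full = polygon_area(constrain_area(shrunk, reference_area=16.0, weight=1.0))
print(free, half, full)
```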

Export options

Once contour evolution is done, the plugin can export the results in different formats, depending on what suits your needs best.

- Export ROI: export format of the contours. Possible values are:

  - NONE: no export; contour(s) remain visible as a layer (default)

  - ON_INPUT: contour(s) are exported as ROI(s) on the original image.

  - ON_NEW_IMAGE: contour(s) are exported as ROI(s) on a copy of the image.

  - AS_LABELS: contour(s) are exported as a labeled image.

- Type of ROI (only when Export ROI = ON_INPUT or ON_NEW_IMAGE):

  - AREA: contours are exported as Area (boolean mask) ROI(s).

  - POLYGON: contours are exported as Polygon (geometric shape) ROI(s).
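The difference between a geometric (polygon) contour and a labeled image can be made concrete with a short sketch that rasterises 2D polygons into a label grid. This is an illustration only, not the plugin's export code; it assumes an even-odd ray casting test sampled at pixel centres, and the contours are made up.

```python
def point_in_polygon(x, y, polygon):
    """Even-odd ray casting test for a closed polygon of (x, y) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x0, y0 = polygon[i]
        x1, y1 = polygon[(i + 1) % n]
        if (y0 > y) != (y1 > y):  # the edge straddles the horizontal ray
            x_cross = x0 + (y - y0) * (x1 - x0) / (y1 - y0)
            if x < x_cross:
                inside = not inside
    return inside


def label_image(width, height, polygons):
    """Pixels inside the k-th polygon receive the value k (background is 0)."""
    labels = [[0] * width for _ in range(height)]
    for label, polygon in enumerate(polygons, start=1):
        for row in range(height):
            for col in range(width):
                # Sample at the pixel centre.
                if point_in_polygon(col + 0.5, row + 0.5, polygon):
                    labels[row][col] = label
    return labels


# Two hypothetical rectangular contours on a 10 x 8 image.
contours = [[(1, 1), (4, 1), (4, 4), (1, 4)],
            [(5, 2), (8, 2), (8, 6), (5, 6)]]
labels = label_image(10, 8, contours)
for row in labels:
    print("".join(str(value) for value in row))
```

A boolean mask for a single contour is the same grid restricted to one label value.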

Method

The principle of deformable models is to define an initial curve (2D or 3D, open or closed) in the vicinity of an object of interest, and let this curve deform until it reaches a steady state when it fits the boundary of the target. Although this looks like black magic at times, there are sound underlying mathematics involved. The curve and its deformation can be expressed in many different ways, which I describe below.

Curve representations

Curve representations are presented here in (more or less) chronological order (rather than by group) to give a coherent view of the evolution in the field over the last decades. This review is however not exhaustive and only gives the main lines of research for the general reader, directed toward the purpose of the plugin (cell segmentation and tracking).

- Explicit parametric representation. The original scheme (originally developed by Kass, Witkin & Terzopoulos in 1987) is to represent the curve by a parametric equation. The parameters of this curve are adjusted during the deformation, and the curve is regularly reparameterized to ensure global smoothness. This model is known as the “Snake” model, since the evolution of an open curve may mimic the undulations of a snake. Such a model is fast and computationally efficient, although it is usually criticized for two main reasons: 1) its lack of topological flexibility (a parametric curve cannot be split), so a single curve must be created for each object of interest to detect; 2) the extension to 3D is quite delicate in terms of curve manipulation and reparameterization.

- Implicit level set representation. Almost coincidentally, other researchers from the field of fluid dynamics (namely S. Osher and J. Sethian in 1988) derived an alternative representation of the curve, by defining it as the zero-level of a higher-dimensional Lipschitz function. One metaphor of this representation is a flame (the fluid) burning a hole through a piece of paper. The boundary (2D) of the hole is the zero-level of the flame function (3D), and the deformation of the hole’s boundary is implicitly controlled by the motion of the flame. For this reason this model is usually termed “implicit model”. This model has received extensive attention from the community, since it solves most of the drawbacks of the Snake model: 1) the model is topology-independent (the fluid function may evolve such that the zero-level actually appears as two distinct contours), hence a single level set function may be used to detect multiple non-touching objects in the image; 2) the formalism naturally works in any dimension. Yet, these advantages also come at a significant computational cost (notably in 3D, where the level set function is 4D). Moreover, for the purpose of cell segmentation and tracking, the topological flexibility can become a drawback: since topology is uncontrolled by nature, objects moving and eventually touching over time will see their zero-levels merge, such that their identity is lost. Many methods have therefore focused on re-inserting topology control in these approaches.

- Explicit discrete representation. With the improvement in computer graphics technology and in the Computer Aided Design (CAD) industry, extensive development has been conducted on discrete curve representations. The goal of these representations is to take the best of both worlds with 3 key features: 1) inherit the speed and computational efficiency of the parametric representation; 2) incorporate the topological flexibility of implicit representation; 3) rely on a discrete data structure in order to benefit from the power of graphics processing units for computation and/or rendering purposes. As a result, the computational cost in both time and memory load can be decreased by up to several orders of magnitude as compared to an equivalent level set representation.

- Graph-cut representation. Graph-cut representations rely on the theory of minimal path finding in a connected graph, and have only recently been applied in the context of deformable model-based segmentation. This representation is usually compared to the level-set approach, but considers the curve as the minimum cut of a flow network that connects all image pixels to either an imaginary source node or an imaginary target (sink) node. The major difference with the level set models lies in the mathematical description of the curve evolution, which is discussed later below.
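The topological behaviour of the implicit representation, including the loss of identity when objects touch, can be illustrated with a short sketch. It assumes a toy implicit function built from two discs on a small grid, not an actual level set evolution; the function names, grid size and disc positions are arbitrary.

```python
import math


def implicit_function(x, y, centres, radius):
    """Negative inside any disc, positive outside; the contour is the zero level."""
    return min(math.hypot(x - cx, y - cy) - radius for cx, cy in centres)


def count_objects(centres, radius, size=40):
    """Count 4-connected components of the region where the function is negative."""
    inside = [[implicit_function(col, row, centres, radius) < 0 for col in range(size)]
              for row in range(size)]
    seen = [[False] * size for _ in range(size)]
    components = 0
    for row in range(size):
        for col in range(size):
            if not inside[row][col] or seen[row][col]:
                continue
            components += 1
            seen[row][col] = True
            stack = [(row, col)]
            while stack:  # flood fill of one component
                r, c = stack.pop()
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if 0 <= nr < size and 0 <= nc < size and inside[nr][nc] and not seen[nr][nc]:
                        seen[nr][nc] = True
                        stack.append((nr, nc))
    return components


# The same single function describes two objects while they are apart...
apart = count_objects([(10, 20), (30, 20)], radius=6)
# ...but only one once they come into contact: their identities are merged.
touching = count_objects([(15, 20), (25, 20)], radius=6)
print(apart, touching)
```

An explicit representation with one contour per object keeps the two identities separate in the second case, which is the motivation for the coupling used by this plugin.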

Curve evolution

Once the contour representation is fixed, one must choose a mathematical framework to actually drive the deformation of the curve from its initial position toward the boundary of the object of interest. Here again, several solutions are available; the most popular ones are cited below in arbitrary order.

- Dynamic mass-spring systems. Such systems consider the curve to be discretized into a set of physical nodes connected with springs, and the entire evolution of this physical system follows Newton's laws of motion. By applying attractive and repulsive forces on the various nodes of the system (using the image data to guide them in the correct direction), the curve deforms until it reaches a physical steady state, assumed to correspond to the solution, although there is no guarantee on its exactness.

- Energy-minimizing frameworks. These frameworks rely on the definition of an energy functional that combines terms related either to the image data (usually referred to as “data attachment” terms), to geometrical properties of the curve (usually termed “regularization” terms), or to prior estimates of the solution when available. The key idea is to define the functional such that the solution of the segmentation problem (i.e. the optimal position of the curve) is a minimizer of this functional. These frameworks are particularly popular thanks to their flexibility, while minimization per se can be conducted using a wide variety of numerical schemes, one of the most popular being the Euler-Lagrange steepest gradient descent, which guarantees convergence to at least a local minimum of the functional.

- Statistical frameworks. These frameworks are very similar to the energy-minimizing ones, however the optimal solution is defined by maximizing the probability of a given gain function, which is formed of terms analogous to those of the previous case. The advantage here is that the incorporation of shape priors is facilitated by the statistical formulation of the problem. The notion of convergence to a local minimum is complemented by a statistical measure of the correctness of fit of the original terms.

- Energy-minimizing graph-cuts. The use of graph-cuts for deformable models has mostly been motivated by the high computational cost and convergence time of energy-minimizing (or statistical) frameworks, based on the observation that steepest gradient descent approaches may take a very high number of iterations to converge, yielding substantial computational costs, notably in the case of level set approaches. The idea here is to minimize an energy functional defined on a graph constructed from the image grid, such that each image pixel (or voxel in 3D) is connected to its neighbors by an edge with a cost, while one set of nodes is connected to an additional imaginary “source” node, and the remaining nodes to a “target” node. This graph is then cut with minimal cost using a maximum-flow algorithm. The advantage is a substantial gain in convergence time (the number of iterations is up to several orders of magnitude lower than in Euler-Lagrange minimization), at the cost of a more complex incorporation of energy terms, which need to be expressed in terms of graph edge costs.
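The energy-minimizing framework with steepest gradient descent can be sketched on a toy polygon. The energy below is an assumption made for the illustration: a “data attachment” term that pulls control points toward a circle of known radius (standing in for the image information) plus a “regularization” term penalising long segments. It is not the functional used by the plugin, and all names and constants are hypothetical.

```python
import math

R_TARGET = 10.0  # hypothetical object radius standing in for the image data
ALPHA = 0.1      # hypothetical weight of the regularization term


def energy(points):
    """Data attachment term plus weighted regularization term."""
    n = len(points)
    data = sum((math.hypot(x, y) - R_TARGET) ** 2 for x, y in points)
    reg = sum((points[i][0] - points[(i + 1) % n][0]) ** 2
              + (points[i][1] - points[(i + 1) % n][1]) ** 2 for i in range(n))
    return data + ALPHA * reg


def gradient(points):
    """Analytical gradient of the energy with respect to each control point."""
    n = len(points)
    grads = []
    for i in range(n):
        x, y = points[i]
        px, py = points[i - 1]
        nx, ny = points[(i + 1) % n]
        norm = math.hypot(x, y) or 1e-12  # guard against a point at the origin
        pull = 2.0 * (norm - R_TARGET) / norm
        grads.append((pull * x + 2.0 * ALPHA * (2 * x - px - nx),
                      pull * y + 2.0 * ALPHA * (2 * y - py - ny)))
    return grads


def descend(points, time_step, iterations):
    """Explicit steepest descent: each iteration is one small jump of the contour."""
    energies = [energy(points)]
    for _ in range(iterations):
        grads = gradient(points)
        points = [(x - time_step * gx, y - time_step * gy)
                  for (x, y), (gx, gy) in zip(points, grads)]
        energies.append(energy(points))
    return points, energies


start = [(5 * math.cos(2 * math.pi * k / 20), 5 * math.sin(2 * math.pi * k / 20))
         for k in range(20)]
final, energies = descend(start, time_step=0.1, iterations=200)
mean_radius = sum(math.hypot(x, y) for x, y in final) / len(final)
print(f"energy {energies[0]:.1f} -> {energies[-1]:.3f}, mean radius {mean_radius:.3f}")
```

The contour settles slightly inside the target radius because the regularization term keeps pulling it inward, which is the same trade-off as the contour smoothness parameter described earlier.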
